Action Face: Streamlining 3D Avatar Customization for Instant Engagement

A playful yet efficient experience that gets users to their Action Face—fast.

Overview

Action Face is an app that lets users scan their face, customize a 3D avatar, and order a personalized action figure. This project focuses on the post-scan customization experience within the iOS app—designed for both high-traffic events and at-home users. Built to deliver fun fast, the app guides users through quick hair and outfit selections while their scan processes, leading up to a magical face reveal and an option to buy their figure.

Challenge

In fast-paced environments like NBA games, users didn’t want to spend time fine-tuning every detail of their avatar—they wanted instant payoff. We needed to deliver the magic moment—seeing their own face on a 3D avatar—as quickly as possible. But offering too many customization options up front risked slowing them down or diluting the excitement.

This project focused on designing a mobile-first iOS app experience, whether users scanned a QR code or used an in-event iPad. Our challenge was to balance speed and delight: show users their “best self” quickly, without overwhelming them with choices—just enough to feel personal and fun.

How do we guide users to their best-looking avatar in seconds—before the magic wears off?

Goals

Minimize customization before the face scan reveal

Give users something fun to do while waiting (e.g., picking a hairstyle and hair color)

Build anticipation for their avatar using the “pizza tracker” loading state

Allow post-reveal tuning for skin tone and details

Keep the experience light, fast, and delightful for events and mobile users

Seamlessly drive to next steps: AR, sharing, or purchase

Hair Selection (Pre-Reveal)

While the face scan is being processed, users are instantly dropped into choosing hair and hair color. It’s the perfect distraction—and it feels like progress. This also gets people emotionally invested in their avatar before they even see it.

Select Body (Pre-Reveal)

Next, users select a themed outfit or jersey, creating a recognizable, personal connection (especially at team-sponsored events). To reduce decision fatigue, users see only jerseys from the team they selected before scanning, narrowed down to Home, Away, or Alternate options. This keeps the momentum going while making the choices feel tailored and relevant.
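The jersey narrowing described here can be pictured as a simple filter over a catalog. The sketch below is illustrative only: the `Jersey` type, the `jerseys_for_team` helper, and the team names are hypothetical and are not taken from the app's actual code.

```python
from dataclasses import dataclass

# Hypothetical data model; names are illustrative, not the app's real code.
@dataclass(frozen=True)
class Jersey:
    team: str
    variant: str  # "Home", "Away", or "Alternate"

# Display order for the three variants named in the case study.
VARIANT_ORDER = ("Home", "Away", "Alternate")

def jerseys_for_team(catalog, selected_team):
    """Return only the selected team's jerseys, ordered Home, Away, Alternate."""
    matches = [
        j for j in catalog
        if j.team == selected_team and j.variant in VARIANT_ORDER
    ]
    return sorted(matches, key=lambda j: VARIANT_ORDER.index(j.variant))

# Placeholder catalog with two made-up teams.
catalog = [
    Jersey("Team A", "Away"),
    Jersey("Team B", "Home"),
    Jersey("Team A", "Home"),
    Jersey("Team A", "Alternate"),
]

options = jerseys_for_team(catalog, "Team A")
print([j.variant for j in options])
```

Whatever the real implementation looks like, the design point is the same: the team choice made before scanning caps this step at three options at most.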

The Reveal (3D Avatar Completion)

A “Hold on... magic is happening!” screen with a pizza tracker builds anticipation while the face scan finishes processing. Once complete, users are presented with their full 3D Action Face avatar—interactive, fully rendered, and wearing their selected team’s jersey. They can spin the avatar, see themselves in 3D for the first time, and choose what to do next: rescan if they’re not happy, buy now if they are, or keep customizing. This instant, playful payoff gave users a strong emotional moment while maintaining forward momentum in the experience.

Tuning Face & Bringing Your Avatar to Life

After the reveal, users can fine-tune their avatar by adjusting facial features like skin tone, brightness, and saturation—giving them a sense of control without overwhelming them. From here, they can enter AR mode, where their avatar appears in their real-world environment, animated and interactive. Whether they’re watching it strike poses or perform actions, this moment makes the experience feel truly magical—their avatar is now alive.

Testing & Iteration

Reducing Steps to Personalization

Before:

The original hair customization flow forced users into a back-and-forth loop:

Select a hairstyle → tap “Color” → choose a shade → tap back → return to hair options.

3 out of 5 participants got lost trying to return to the hairstyle options after landing in the color menu.

After:

Hair styles and colors are now presented in a unified, scrollable interface. Users can preview hairstyles and apply color in one seamless flow—without navigating away from the screen.

This adjustment significantly reduced interaction steps and improved clarity around available options. It also created a smoother and more enjoyable avatar creation experience, especially for users trying multiple looks.

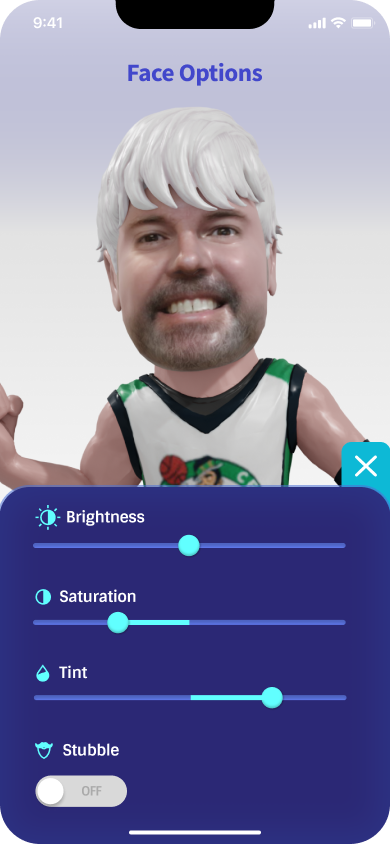

Consolidating Skin Tone Adjustments into a Single Control Panel

Before:

Previously, users had to navigate through multiple steps to adjust skin tone:

Tap “Face” → Select “Skin tone” → Open individual menus for Brightness, Saturation, and Tint.

All 5 users we observed took a long time to realize that brightness, saturation, and tint lived in three separate settings. Because each setting opened in its own menu, the process felt slow and disjointed, especially for first-time users.

After:

Skin tone controls are now unified in a single, intuitive panel. Tapping the face icon opens a streamlined interface with sliders for brightness, saturation, and tint, along with a new toggle for stubble.

This redesign simplifies the user flow, enabling quick adjustments from one screen and offering more control without additional taps.

Outcomes

⚡️ Faster time-to-fun: Users engaged instantly with customization while their scan processed

🎯 90% of users continued past the reveal screen to keep playing

📹 90% of users recorded their AR video to share or relive the moment

🎉 Improved perceived speed through playful “pizza tracker” animations during scan processing

🛍️ Increased conversion rate by 60% by prompting “Buy Now” at the emotional peak—immediately after the face reveal and again after the AR experience